I recently built a DZ65RGB keyboard to replace my TADA68 and I’ve been thrilled with it, but it is lacking one major feature: it has no underglow support. So I undertook a challenge to add high-quality LEDs to the underside of my favorite TADA68 case, powered through the DZ65RGB PCB, and controlled by the keyboard microcontroller (an STM32 ARM Cortex) running the QMK firmware. While underglow is a configuration setting in QMK for many AVR microcontrollers, it is not supported out of the box for the STM32 ARM microcontroller, and it conflicts with the individually addressable RGB LEDs. This is a detailed tutorial on the hardware and software required to add dimmable lights to any STM32 keyboard with full production-quality integration.

Custom Keyboard - DZ65RGB

My previous TADA68 build mysteriously bit the dust, so I decided to build a new keyboard and reuse my TADA68 aluminum case. Thankfully I found a fantastic (nearly) drop-in replacement PCB: the DZ65RGB. It is another 65% keyboard like the TADA68, and has my most desired improvements over the TADA68: individually addressable RGB LEDs, USB-C, hot-swappable switches, and a lovely purple and gold color scheme!

Custom Keyboard - TADA68

As I learned more about the world of mechanical keyboards, I wanted to experiment with new switches, play with new keycaps, and most importantly try my hand at building my own.

Fez Viewer

Fez Viewer is a tool that can load the models and levels from Fez, and allow you to freely inspect and fly through them. It currently supports loading individual art objects, animation sets, and even entire levels.

Cull Shot

Cull Shot is a single-player time attack shooter. It was created for Ludum Dare 29, with the theme “Beneath the Surface”. The player holds a button to charge up a “cull shot”; the longer the button is held, the further the shot is fired. The shot reveals red cubes underneath a diagonal landscape. Pressing another button captures these cubes, and each captured cube extends the time limit. The objective is simply to last as long as possible.

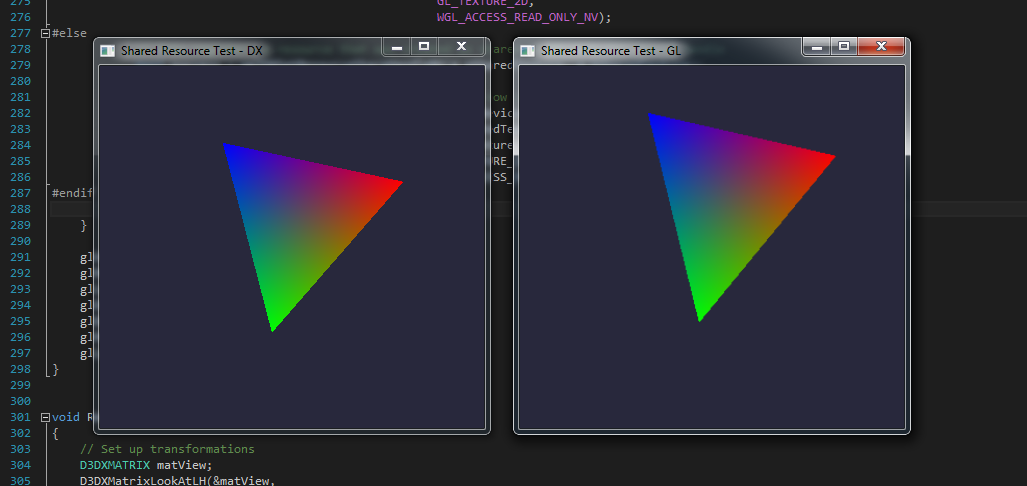

Sharing Resources Between DirectX and OpenGL

I’ve recently had a need to simultaneously render using both DirectX and OpenGL. With this technique it is possible to efficiently perform some rendering operations within one API for part of an image, and switch to the other API for another part of the image. It can also be used to perform all rendering in a specific API, while presenting that final render target using another API. Providing direct access to graphics memory between APIs allows efficient and optimal sharing.

Monitor Overclock

In late 2012 I decided to try out an imported Korean Yamakasi 27" 2560x1440 IPS display. These have become particularly popular because of the high-quality panels (the same as the Dell and Apple high-end monitors) at less than half the price, with some early models capable of overclocking to 120Hz. I decided the benefits outweighed the risks of spending so much money on a monitor with essentially no warranty. The panel was excellent, with no backlight bleed, excellent color, no dead pixels, and good response time. Unfortunately, after one year the DVI board fried, and I ended up with problems similar to the one below:

Aggrogate

Aggrogate is a 2-player action puzzle game. Each player controls light and dark cubes (light/dark purple or light/dark green). They must match 4 cubes of the same color to clear those cubes. The first player to clear all of their colored cubes wins. The trick is that both players are playing on the same collection of cubes, and shooting it causes it to spin for both players!

Zero Zen

Zero Zen is a 2-4 player game, most easily described as “sumo wrestling with airplanes”. Each player controls a plane with left/right rotation, a thruster to move forward, and a “shoot” button which fires a shotgun blast with tremendous kickback. The flying physics work similarly to Asteroids, where your ship continues coasting after accelerating. The bullets don’t do any damage; they just push players around. The objective is to knock all opponents into the outer walls and be the last person standing.

Gameboy Backlight

“Chiptune” is a term used to describe music created using specifically limited hardware, usually classic computer equipment like the Gameboy, the C64, etc. I’ve always found chiptune music interesting, and wanted to try my hand at making some with an original Gameboy and LSDJ. Since I wanted to play around with it before bed, I decided to install a backlight from KitschBent in my Gameboy.
